
Containers, along with orchestrators such as Kubernetes, have ushered in a new era of application development methodology, enabling microservices architectures as well as continuous development and delivery. Containerization has many benefits and as a result has seen wide adoption. According to Gartner, by 2020, more than 50% of global organizations will be running containerized applications in production. However, building apps using Docker containers also introduces new security challenges and risks. A single compromised Docker container can threaten all other containers as well as the underlying host, underscoring the importance of securing Docker.

The GitLab Docker images are monolithic images of GitLab running all the necessary services in a single container.

Docker for Mac today only works out of the box if you have only one user, or if you switch between users and re-run Docker.app as the new user. The workflow that you have works with the workaround that I gave you, but it is clearly not an optimal user experience. We plan to work to improve it in the future.

On August 31, 2021, Docker published a press release and a blog post outlining a few changes. The first was for Docker Business subscriptions, which add features such as controlling which containers can be accessed via Docker Hub and the ability to handle developer onboarding.

For traditional infrastructure, security involved securing your application and the host it’s running on and then protecting the application as it runs. Containerization introduces several new challenges that must be addressed. Containers enable microservices, which increases data traffic and network and access control complexity. Containers rely on a base image, and knowing whether the image comes from a secure or insecure source can be challenging. Images can also contain vulnerabilities that can spread to all containers that use the vulnerable image. Containers have short life spans, so monitoring them, especially during runtime, can be extremely difficult.

Securing Docker can be loosely categorized into two areas: securing and hardening the host so that a container breach doesn’t also lead to a host breach, and securing Docker containers. We have briefly covered host security in a previous blog article. This article focuses on container security by highlighting Docker container security risks and challenges as well as providing best practices for hardening your environment during the build and deploy phases and protecting your Docker containers during runtime. We also share best practices for securing Kubernetes, given its massive adoption and critical role in orchestrating containers. Finally, we provide you with 11 key security questions your container security platform should be able to answer, giving you the insights and protection you need to run containers and Kubernetes securely in production.

To start a SonarQube instance, run `docker run --name sonarqube --restart always -p 9000:9000 -d sonarqube`. Go to localhost:9000 and there should be a running instance with admin as the default login details.

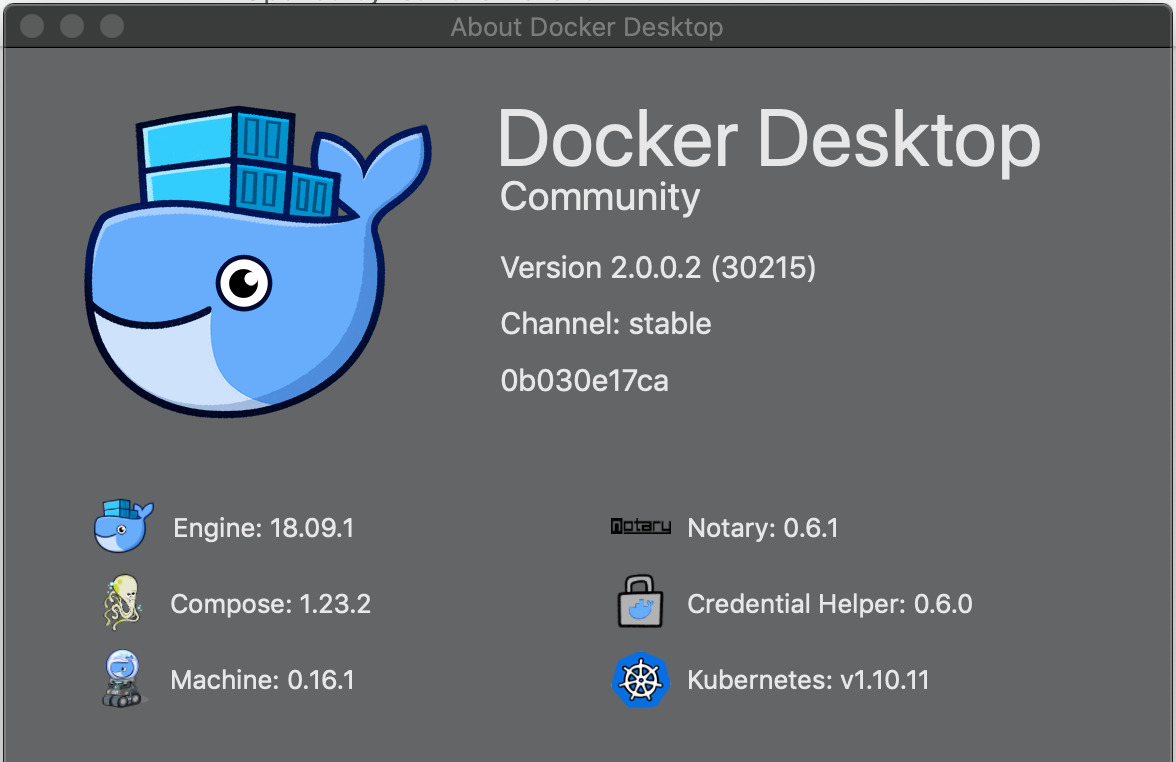

Containers, unlike VMs, aren’t necessarily isolated from one another. A single compromised container can lead to other containers being compromised. Containerized environments have many more components than traditional VMs, including the Kubernetes orchestrator that poses its own set of security challenges. Can you tell which deployments or clusters are affected by a high-severity vulnerability? Are any exposed to the Internet? What’s the blast radius if a given vulnerability is exploited? Is the container running in production or a dev/test environment?

Configuration raises its own questions. Are images launching unnecessary services that increase the attack surface? Are secrets stored in images? Are containers running with heightened privileges when they shouldn’t?

As one of the biggest security drivers, compliance can be a particular challenge given the fast-moving nature of container environments. Many of the traditional components that helped demonstrate compliance, such as firewall rules, take a very different form in a Docker environment.

Always use the most up-to-date version of Docker. The runC vulnerability disclosed in early 2019 (CVE-2019-5736), for example, was patched soon after its discovery with the release of Docker version 18.09.2. Allow only trusted users control of the Docker daemon by making sure only trusted users are members of the docker group. Make sure you have rules in place that give you an audit trail.

Disallow containers from acquiring new privileges. By default, containers are allowed to acquire new privileges, so this configuration must be explicitly set. Another step you can take to minimize a privilege escalation attack is to remove the setuid and setgid permissions in the images. As a best practice, run your containers as a non-root user (UID not 0). If you are using containers without an explicit container user defined in the image, you should enable user namespace support, which will allow you to re-map the container user to a host user.

Use registries that have a valid registry certificate or ones that use TLS to minimize the risk of traffic interception.
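The privilege recommendations above (a non-root user and no new privileges) can be sketched as a small helper that assembles a `docker run` argument list. This is only an illustration under stated assumptions: the function names and the image name `example/app:1.0` are hypothetical, and nothing is executed, the list is only built.

```python
from typing import List


def least_privilege_flags(uid: int, gid: int) -> List[str]:
    """Build `docker run` flags for a non-root user that cannot gain privileges."""
    if uid == 0:
        # UID 0 is root; the recommendation is to run as a non-root user.
        raise ValueError("containers should run as a non-root user (UID not 0)")
    return [
        "--user", f"{uid}:{gid}",                    # explicit non-root UID:GID
        "--security-opt", "no-new-privileges:true",  # processes cannot gain new privileges
    ]


def build_run_command(image: str, uid: int, gid: int) -> List[str]:
    """Assemble the full argument list; nothing is executed here."""
    return ["docker", "run", "-d", *least_privilege_flags(uid, gid), image]


# Hypothetical image name, used only for illustration.
command = build_run_command("example/app:1.0", uid=1000, gid=1000)
print(" ".join(command))
```

User namespace remapping is not a per-container flag in this sketch because it is configured on the daemon (for example through the `userns-remap` setting in `daemon.json`).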

Having fewer components in your container reduces the number of available attack vectors, and a minimal image also yields better performance because there are fewer bytes on disk and less network traffic for images being copied. BusyBox and Alpine are two options for building minimal base images. Implement a strong governance policy that enforces frequent image scanning. Stale images or images that haven’t been scanned recently should be rejected or rescanned before moving to the build stage.

Don’t store secrets in images/Dockerfiles. By default, you’re allowed to store secrets in Dockerfiles, but storing secrets in an image gives any user of that image access to the secret. When a secret is required, use a secrets management tool.

Don’t mount sensitive host system directories on containers, especially in writable mode that could expose them to being changed maliciously in a way that could lead to host compromise. Don’t run containers with the `--privileged` flag, as this type of container will have most of the capabilities available to the underlying host. This flag also overwrites any rules you set using `--cap-drop` or `--cap-add`. You can use `--cap-drop` to drop a specific container’s capabilities (also called Linux capabilities), and use `--cap-add` to add only those capabilities required for the proper functioning of the container.

Don’t map any ports below 1024 within a container, as they are considered privileged because they transmit sensitive data. By default, Docker maps container ports to one that’s within the 49153–65535 range, but it allows the container to be mapped to a privileged port. By default, the ssh daemon will not be running in a container, and you shouldn’t install it; leaving it out simplifies security management of the SSH server.

Set the container’s root filesystem to read-only. Once running, containers don’t need changes to the root filesystem. Any changes made to the root filesystem will likely be for a malicious objective.
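Several of the runtime rules above can be checked mechanically before a container starts. The following is a minimal sketch, not a complete policy engine: `audit_run_args` and `SENSITIVE_HOST_DIRS` are hypothetical names, the list of sensitive directories is an assumed example, the port rule is interpreted as applying to the published host port, and only the space-separated `flag value` form of `docker run` options is recognized.

```python
from typing import List

# Assumed, illustrative set of host paths treated as sensitive.
SENSITIVE_HOST_DIRS = {"/", "/boot", "/dev", "/etc", "/lib", "/proc", "/sys", "/usr"}


def audit_run_args(args: List[str]) -> List[str]:
    """Return a list of findings for a `docker run` argument list."""
    findings = []
    if "--privileged" in args:
        findings.append("--privileged is set")
    if "--read-only" not in args:
        findings.append("root filesystem is writable (no --read-only)")
    if "--cap-drop" not in args:
        findings.append("no capabilities dropped (no --cap-drop)")
    for flag, value in zip(args, args[1:]):
        if flag in ("-p", "--publish"):
            # Accepts [ip:]hostPort:containerPort[/proto]; a lone containerPort has no host part.
            parts = value.split("/")[0].split(":")
            if len(parts) >= 2 and parts[-2].isdigit() and int(parts[-2]) < 1024:
                findings.append(f"privileged host port {parts[-2]} is published")
        elif flag in ("-v", "--volume"):
            # Accepts source:destination[:options]; writable unless options include "ro".
            parts = value.split(":")
            options = parts[2].split(",") if len(parts) > 2 else []
            if parts[0] in SENSITIVE_HOST_DIRS and "ro" not in options:
                findings.append(f"sensitive host directory {parts[0]} is mounted writable")
    return findings


# Hypothetical image name, used only for illustration.
risky = ["docker", "run", "--privileged", "-p", "80:8080", "-v", "/etc:/host-etc", "example/app:1.0"]
for finding in audit_run_args(risky):
    print(finding)
```

A command that passes `--read-only`, `--cap-drop ALL` plus only the needed `--cap-add` entries, a host port of 1024 or higher, and read-only mounts produces no findings in this sketch.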
Don’t share the host’s network namespace, process namespace, IPC namespace, user namespace, or UTS namespace, unless necessary, to ensure proper isolation between Docker containers and the underlying host. By default, Docker containers share their resources equally with no limits. Specify the amount of memory and CPU needed for a container to operate as designed instead of relying on an arbitrary amount.

One of the advantages of containers is tight process identifier (PID) control. Each process in the kernel carries a unique PID, and containers leverage the Linux PID namespace to provide a separate view of the PID hierarchy for each container. Putting limits on PIDs effectively limits the number of processes running in each container. Limiting the number of processes in the container prevents excessive spawning of new processes and potential malicious lateral movement. Imposing PID limits also prevents fork bombs (processes that continually replicate themselves) and anomalous processes.
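The limits described above map onto the `--memory`, `--cpus`, and `--pids-limit` options of `docker run`, and host namespace sharing shows up as options such as `--pid=host`. Below is a hedged sketch of how those settings might be assembled and checked; the helper names and the example values are hypothetical, and only the `flag=value` form of the namespace options is detected.

```python
from typing import List

# Options that share a host namespace with the container (only the "=" form is checked).
HOST_NAMESPACE_FLAGS = {
    "--network=host", "--net=host", "--pid=host",
    "--ipc=host", "--uts=host", "--userns=host",
}


def resource_limit_flags(memory_mb: int, cpus: float, pids_limit: int) -> List[str]:
    """Build explicit memory, CPU, and PID limits for `docker run`."""
    if memory_mb <= 0 or cpus <= 0 or pids_limit <= 0:
        raise ValueError("limits must be positive")
    return [
        "--memory", f"{memory_mb}m",       # hard memory limit in megabytes
        "--cpus", str(cpus),               # number of CPUs the container may use
        "--pids-limit", str(pids_limit),   # caps process count, which contains fork bombs
    ]


def shared_host_namespaces(args: List[str]) -> List[str]:
    """Return the arguments that share a host namespace, in the order given."""
    return [arg for arg in args if arg in HOST_NAMESPACE_FLAGS]


# Example values are arbitrary; size them to what the application actually needs.
print(resource_limit_flags(memory_mb=512, cpus=1.5, pids_limit=100))
print(shared_host_namespaces(["docker", "run", "--pid=host", "example/app:1.0"]))
```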


Author: Kevin
